The Futures Dramaturg

GenAI for foresight has crossed a threshold. The profession now needs to cross one too.

The TL;DR version: AI now produces genuinely good foresight analysis. Not “good for an AI”, just good, period. The profession’s value is shifting from analytical work to holding the arc of an engagement: keeping people in the room, literally and figuratively, when the futures get uncomfortable, framing the right questions, facilitating the conversations that matter, and shepherding insight into action.

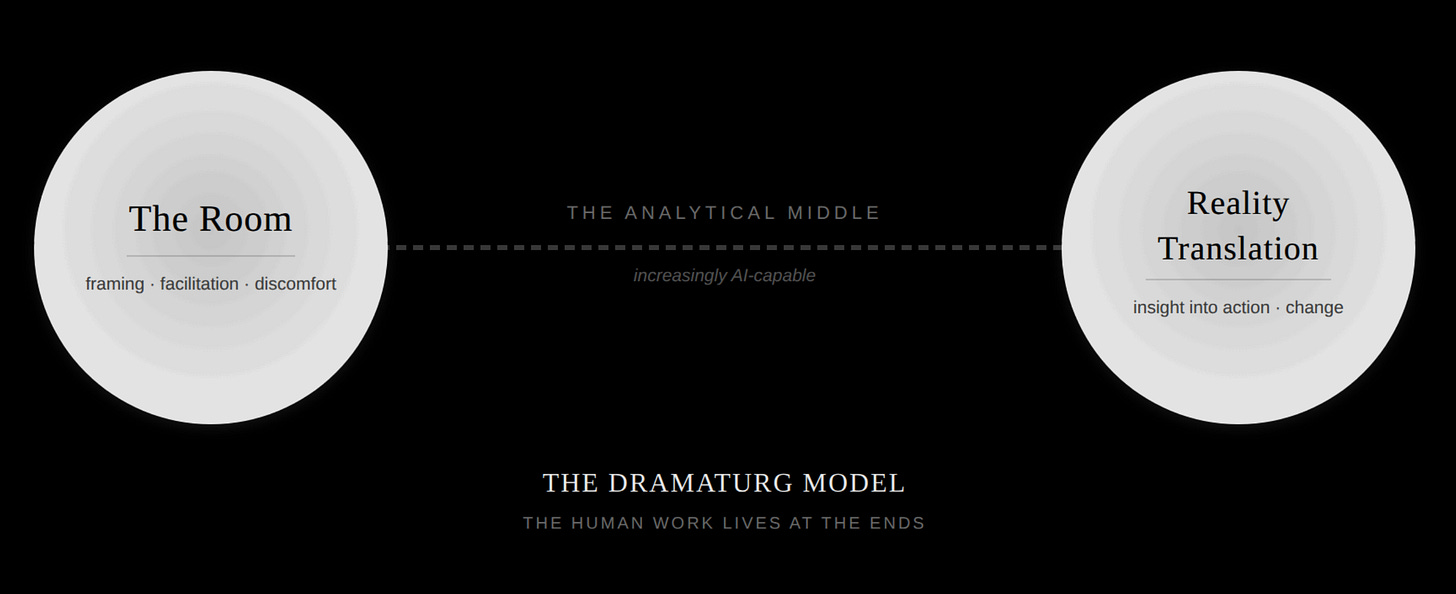

I’m calling this the dramaturg model. The analytical middle of foresight can increasingly be done with AI. The need for human work is stronger at the ends: the room where people confront uncomfortable truths, and the organisational reality where change actually happens. That’s where practitioners need to invest.

This shift is underway now, and it will keep moving.

I’ve spent considerable time over the past few years using generative AI for strategic foresight work, constantly trying to keep my finger on the pulse of what these tools are capable of in my domains. That has meant running full engagement cycles: signal scanning, driver analysis, forecasting, scenario development, consequence mapping, cross-impact analysis, resilience assessment, strategic transition frameworks, future personas, tangible artifacts.

The kind of work that typically involves a team, a timeline measured in weeks or months, and a substantial budget.

Recently, something has shifted, and I claim that the tools have silently crossed the ‘good enough’ threshold for so many things that we foresight professionals need to recalibrate what we are here for.

I’m not alone in noticing. A 2025 survey by the OECD and World Economic Forum found that two-thirds of foresight practitioners are already using AI in their work, with most reporting significant time savings and expanded analytical capacity. The shift is underway.

In short, the output is now good. Scenarios that are genuinely distinct. Cross-impact matrices that reveal systemic patterns I hadn’t consciously assembled. Personas that are emotionally compelling. Strategic recommendations that are specific, actionable, and internally consistent across a body of work that grows more interconnected with each exercise, with a coverage and structure that feels almost suspiciously complete.

What does this mean for us?

I am a foresight professional. This is a significant part of my professional identity and livelihood. And I have watched a machine do a version of my work - not all of it, but a substantial and valuable portion - at a speed and consistency I could never match alone. That excited me, but it also scared me a little. I think the fact that it scared me is important.

(An earlier draft of the paragraph above also said the machine was not doing ‘the hardest parts’ of my work, but I’m conscious that may have been a comforting lie, a psychological crutch to still feel special. Maybe AI is doing the hardest parts.)

If you’re a foresight practitioner reading this, you’re likely in one of several positions. You may have experimented with AI tools for foresight work already and formed a view, in which case I’d urge you to revisit that view, because the capabilities are shifting faster than most assessment cycles can track. And when you do, check yourself for motivated reasoning — are you exploring honestly, or looking for confirmation of a conclusion you’ve already reached?

Specifically: the jump from Anthropic’s Claude Opus 4.5 to Opus 4.6 has been, in my experience, a deceptively large shift: the single most significant threshold-crossing upgrade in AI capabilities for foresight work that I’ve encountered. If you tried this even six months ago and found it wanting, your conclusions have expired. Try again.

You may not have experimented yet but hold a position on whether AI is relevant to your practice. If so, I’d ask you to hold that position lightly for the length of this article. This is a domain where views need reconsidering at a cadence most of us aren’t comfortable with, because like it or not, things are changing fast.

Foresight professionals of all people should know this. How often do we apply that knowledge to ourselves?

You may also have principled objections to AI: ethical concerns about training data, labour displacement, environmental cost, concentration of power. I respect that position. Those concerns are legitimate and they deserve serious engagement. Nothing in this article argues that you’re wrong to hold them.

What I would say is that the capabilities are arriving regardless of whether any individual practitioner adopts them, and that understanding what AI can do in your domain is valuable even, perhaps especially, if you choose not to use it.

Foresight has always been about understanding even the futures you might not want.

Or you may have already composed a mental list of reasons this doesn’t apply to you on the assumption that your work is somehow exempt. If so, I want to gently suggest that the ability to construct reassuring narratives about why uncomfortable futures won’t materialise is a failure mode we’re supposed to help our clients avoid.

We’re not exempt

There is a kind of illusory professional exceptionalism that can be found in many, if not most, domains. In foresight work it goes: “Yes, AI will transform accounting, law, journalism, and customer service, but our work is too creative, too human, too nuanced for machines. This is the one domain where human insight is critically important.”

This story is seductive precisely because it contains a kernel of truth.

Parts of foresight work are deeply human. But the kernel is not the whole crop.

Henrik Skaug Sætra, in a recent preprint, argues that dismissing AI as “stochastic parrots” has become a professional comfort blanket, a way to avoid engaging with how these systems are actually reshaping work.

The critique that gave the phrase stochastic parrots its power was important and necessary. But as a default stance, Sætra argues, it risks becoming a way to not see what’s happening. I think foresight has its own version of this comfort blanket: the belief that our work is too creative, too contextual, too human for AI to meaningfully contribute.

It’s a comforting story.

It’s also increasingly wrong.

Nick Foster, in Could Should Might Don’t, critiques the shiny, consequence-free variety of futures work he calls “Could” futurism. His call for “mundane futures” — more time in the messy middle where people actually live — resonates deeply.

I want to make a parallel call: mundane practice. Less grand theorising about AI’s role in foresight. More honest accounting of what happens when you sit down and do the work with it.

Let me be specific. If you write about megatrends, macro forces, or driving forces of change for a living — AI already does this at least as well as you do for most contexts, and in many cases better. It synthesises across wider source material, maintains consistency across frameworks, and knows the client context better than any random foresight professional. This isn’t capability that’s coming soon. It’s here.

The barbell of human foresight engagement

What I discovered in running full foresight cycles with GenAI is that the human contribution isn’t evenly distributed. It follows a sort of barbell, or U-pattern: heavy human involvement at both ends, AI-dense in the middle.

The opening end is orientation and framing. This is the work of getting a room of executives to admit what they’re actually afraid of, and for all of them to gain honest situational awareness. It’s about reading the room: noticing when the CFO checked out or the CEO leaned in, and choosing which provocation to deploy based on who flinched.

Some of the most powerful foresight tools work precisely because they happen in a physical room with actual humans. An orientation exercise can transform a group’s situational awareness in ways that no document, no report, and no AI-generated scenario can replicate.

When you watch a leadership team physically map out the forces shaping their world and their views of them, it becomes collective sense-making. People read each other’s body language, pick up on hesitations, build shared understanding through the friction of disagreement. The visceral discovery when you see your leadership team doesn’t, in fact, agree on something everyone thought was a shared truth, or the quiet executive who suddenly speaks up and reframes the entire conversation are moments that don’t happen in a chat interface.

Physical presence still has power, and rooms can be efficient vehicles for mindset shifts. I’ve watched a team walk in thinking they were there to discuss a five-year technology plan and walk out realising they had a workforce crisis they hadn’t named. That shift is possible because someone was in the room facilitating, probing, creating the conditions for honest conversation.

AI can generate the analytical inputs that make those conversations richer. It cannot be the person standing at the whiteboard when the room goes quiet and someone finally says the quiet thing out loud.

Will AI-mediated facilitation eventually enter this space? Almost certainly. Immersive simulation environments are already a thing. Robots are coming. But I doubt we’ll be comfortable having machines run deeply human exercises like collective orientation and values negotiation for some time.

The technology may arrive before the trust does, and in facilitation, trust is the technology.

The middle is analysis, synthesis, and generation. Signals through scenarios through consequences through strategy.

From an effort perspective, this used to be the bulk of many foresight projects — and this is where AI excels. GenAI models can now maintain coherence across a massive body of interconnected analysis, a dozen-plus exercises in consistent relationship with each other.

The quality I could match, potentially even exceed, given enough time. What I couldn’t match was the quality at that volume and speed, sustained across every exercise in the cycle, with a consistency no team could replicate in the same timeframe.

And also, when is there ever ‘enough time’?

The closing end is action and implementation, where things get translated back into the physical reality of the organisation. Building roadmaps. Catalysing change.

All this requires what the Greeks called metis — practical wisdom, the knowledge of how things actually work in this specific organisation with these specific humans and their specific histories, grudges, and ambitions. Who needs to be in the room to make this decision stick? Who will quietly sabotage it if they weren’t consulted? Which board member needs to feel like this was their idea? That knowledge lives in relationships and corridor conversations and decades of institutional memory.

No AI has access to all that, and providing it with enough context to be genuinely helpful here is itself a substantial act of judgment.

An important caveat: I don’t think the barbell is a permanent shape. It’s the shape of the work right now. The middle is already highly AI-capable; the ends are still more firmly human. But the boundary will keep moving. AI will get better at facilitating structured conversations. It will gain access to more organisational context. The human ends of the barbell will compress over time — not disappear, but narrow. Recognising that the barbell is a snapshot, not an equilibrium, is itself a foresight act. Plan for today’s shape; don’t assume it’s tomorrow’s.

Points of disagreement

I’m not the first or the only one thinking about the changing nature of foresight work, quite obviously. The growing body of work on AI in foresight is encouraging and, I think, incomplete in some important ways.

A recent case study from Queensland University of Technology, working with the Queensland Government, documented a 60-70% reduction in staff hours through AI-augmented scenario development. They doubled their scenario output. They completed analysis in weeks rather than months. These are real gains and they match my experience. Where I depart from the efficiency framing is this: the most important thing wasn’t going faster. It was going deeper. More exercises than time constraints typically allow. Branches I wouldn’t have explored. Connections I wouldn’t have seen.

Speed is the obvious benefit. Depth is the transformative one.

The OECD/WEF survey identifies three maturity levels for AI in foresight: basic analysis augmentation, creative sparring partner, and fully integrated systems. What strikes me is that this framework describes integration depth but not role transformation. You can be at the highest maturity level and still think of yourself as an analyst who uses AI tools. That’s not the shift I’m describing.

What I’m describing is something more fundamental: a shift in what the foresight professional is.

There’s also a strand of literature that frames AI’s contribution in expansive, almost breathless terms: “analytical supremacy,” “epistemological pluralism,” AI that dynamically models complex interdependencies and simulates emergent cascading effects. Some of this is real. But these accounts describe AI as upgrading everything more or less uniformly. My experience says the opposite. The upgrade is profoundly uneven; the jagged edge is real even in foresight work. The analytical middle leaps forward. The human ends remain stubbornly, beautifully resistant.

That unevenness is the most important finding, and acknowledging that it too will shift over time is part of honest practice.

A finding from the QUT study deserves particular attention: AI hallucinations occasionally served as creative provocations in scenario work. I’ve seen this too; in divergent ideation, we like telling people there are no bad ideas, but do we extend the same charitable principle to our AI tools? This only works if the practitioner has enough independent expertise to distinguish a genuinely interesting provocation from confident nonsense.

Sætra draws on Stephen Barley’s sociology of work to make a distinction that matters here: between substitutional and infrastructural technological change. Substitution is when a new tool replaces an old one in roughly the same role.

Infrastructure rewires how the work is organised.

Most commentary about AI in foresight treats it as substitutional: a faster way to do scenario analysis, a better research assistant. My experience suggests it’s infrastructural. It doesn’t just speed up the middle of the barbell; it changes the relationship between the parts. When the analytical work that used to take weeks happens in hours, everything upstream and downstream reorganises around that new reality: client expectations shift, engagement design changes, the skills that matter are different, the bottleneck shifts.

Sætra identifies a historical pattern that should concern us: when a bottleneck moves from human to machine, the work doesn’t vanish, but the power embedded in that work does. The typesetter didn’t disappear overnight when the Linotype arrived. But the bottleneck moved, and with it went the leverage.

If foresight’s analytical middle follows this pattern, and I think it’s beginning to, then the question isn’t whether the work gets done. It’s who controls how it gets done, and what happens to the practitioners whose expertise was built on doing it. (Only half-facetiously, I would suggest that one place the bottleneck shifts to is wrangling stakeholders’ calendars.)

This connects to the deskilling concern that bothers me more generally.

If AI handles the analytical middle, where do junior practitioners develop the pattern-recognition skills that make senior expertise valuable? It’s the same tension aviation discovered decades ago — hence the name of this newsletter — when autopilot made flying safer but slowly eroded the manual skills pilots needed when the automation failed.

I don’t have a clean answer. But naming it honestly is more useful than pretending it doesn’t exist.

And I think the honest next question is: could we stop this even if we wanted to?

Almost certainly not. Foresight has always been a tough sell. It’s been seen as expensive, slow, its value diffuse and hard to measure. No client is going to voluntarily pay for the slow human-only version of analytical work to preserve our apprenticeship pipeline.

That’s asking the market to subsidise practitioner skill development out of kindness. Markets don’t do that.

There’s a deeper reason too. The acceleration isn’t just a threat to practitioner skills; there’s an argument to be made that it’s a genuine necessity.

A year-long foresight project on AI-adjacent topics is already obsolete by the time it ships. The world, in at least some domains, is moving faster than traditional foresight cycles can track. The profession had a speed problem before AI arrived as a tool, and now the same technology causing the acceleration is also the only plausible solution to it. If we can’t keep pace with the rate of change, our work is just wrong.

And there’s a genuine good buried in the disruption. Foresight has been a luxury good, available primarily to large organisations with large budgets. If AI makes meaningful futures work accessible to a small nonprofit, a local government, or a startup that could never have afforded a team engagement, that’s not a downside with a silver lining. It’s a good in its own right.

The counterweight is that sense-making has a biological clock. You can generate a cross-impact matrix in minutes, but the leadership team still needs time to sit with what it reveals. The uncomfortable finding that your core business model is a first-curve asset heading for decline needs to marinate.

The conversation where someone finally names the thing everyone was avoiding can’t be scheduled for 2:15 PM between the scenario generation and the action planning. Human processing time isn’t a bottleneck to be optimised. It’s where the value is actually created. Speed the analysis, yes. But don’t mistake faster generation for faster understanding.

The Dramaturg

All of this points to a specific evolution in the foresight professional’s role. I’ve been reaching for the right word for it. Conductor, curator, orchestrator all come to mind, but I landed on dramaturg as the best option. The role has elements of all three, but dramaturg captures something more.

In theatre, the dramaturg holds the arc of the production. They don’t write every scene, but they know which scene needs to land. They don’t perform, but they understand what the audience needs to feel and when. They’re the bridge between raw creative material and its impact on the people who experience it.

That’s what I was doing in my AI-assisted foresight work. Making curatorial decisions constantly. Which exercise next? Do we have enough pre-work for this one? Is the body of divergent work sufficient to make the convergent exercise meaningful? Those decisions drew on years of practice: knowing what good foresight output feels like, and knowing when something’s missing before I could fully articulate why.

The dramaturg knows that the cross-impact matrix is analytically elegant but the persona of a woman managing her husband’s heart failure from a trailer in rural Mississippi is what will actually change behaviour in the boardroom. They design the emotional journey from comfortable assumptions through productive discomfort to committed action.

That design sense doesn’t quite automate. Yet.

This is just a snapshot

I’m wary of making confident predictions with specific timelines; that would be the kind of shiny futurism this piece is arguing against. But I can describe the direction.

The analytical middle of foresight is moving rapidly toward AI competence. Signal scanning, driver analysis, scenario generation, consequence mapping, cross-impact analysis: these are already being done well, and the capabilities are improving visibly.

Two-thirds of practitioners already use AI for this work, and even within cautious government environments, the productivity gains are too significant to ignore.

The creative and communicative layer is shifting next. Personas, artifacts from the future, the narratives that make abstract foresight visceral: these were among the strongest outputs in my work. As more practitioners discover this, the portion of an engagement that requires direct human generation will shrink further.

What will remain human longest is the relational work at both ends: the initial framing and the closing action, because these depend on physical presence, institutional knowledge, and trust between people who share stakes.

The profession may bifurcate. Practitioners who can articulate what they add beyond analysis, the dramaturgs, will find their work more valued, not less. Practitioners whose value proposition was primarily analytical rigour will face increasing competition from clients who can do that themselves.

This is the same structural shift that has reshaped graphic design, copywriting, coding, and data analysis. Foresight isn’t exempt.

I don’t know exactly how far this goes. I’ve run full engagement cycles in single sessions that produced output that was genuinely suitable for real client work. That would have been inconceivable two years ago.

What’s inconceivable today may be routine in another two.

Foresight professionals, of all people, should know better than to assume the present pace is the permanent pace.

So what?

Stop treating AI as either saviour or threat. Start treating it as a tool you need to learn to use well, the way you learned to use scenario frameworks and driver analysis and futures wheels and cones.

Even if your ethical stance is not to use any AI tooling, understanding what it can do in your domain is prudent due diligence.

Run the tools with it. Notice where it surprises you. Notice where it falls flat. Build your own sense of where the barbell sits for your practice, your clients, your domains.

Invest in the parts of your practice that live at the ends: reading rooms, framing questions, designing emotional arcs, shepherding action through institutional resistance.

Get better at being a dramaturg.

Engage with the discomfort rather than narrating around it. If you’re a foresight professional and the idea of AI doing your analytical work well doesn’t make you at least a little uneasy, you either haven’t tried it or you’re not being honest with yourself.

That unease is a weak signal. Use it the way you’d use any other weak signal: as evidence that something is changing and your current model may need updating.

It hits different when the weak signal is about your own work rather than the client’s business, doesn’t it?

Get used to it. You, if anyone, have the tools to deal with that discomfort.

And acknowledge that even the barbell model I’ve described here is a snapshot, not a destination. The human ends may narrow. The middle may expand. New capabilities may emerge that don’t fit neatly into any current framework.

The shape of foresight practice in five years will surprise us, and our ability to be surprised by it, and adapt, is probably the most durable professional skill we have.

There’s a question I haven’t addressed here: what happens when clients figure out this capability shift? When they arrive having already run the scenarios, already generated the personas, and they say they only need you for two days in the room instead of two months of engagement? That’s a different piece, but if the barbell model is right, it’s not the threat it first appears to be. More on that later.

None of us trained for this version of the profession. But then, nobody trains for the exact futures they get.

That’s always been the point.

nb. I am using the terms AI and GenAI somewhat interchangeably here for brevity. I am quite aware of the wildly different flavors that get clumped under the AI umbrella, but this isn’t the essay to sort that out.

PS. A necessary caveat: the results I describe didn’t come from lazy prompting. They came from sustained, systematic work, including carefully constructed Claude Skills, lengthy custom instructions, and iterative refinement. The tools are capable of genuinely good foresight work, but they don’t produce it by default. How you use them matters enormously.

Nick Foster’s Could Should Might Don’t is published by Particular Books (Penguin). My review is here.

Henrik Skaug Sætra’s “The Tyranny of the Stochastic Parrot: How AI Critique Became a Way to Not See What’s Happening” is a 2026 preprint from the University of Oslo. https://papers.ssrn.com/sol3/papers.cfm?abstract_id=6249318

World Economic Forum/OECD (2025). AI in Strategic Foresight: Reshaping Anticipatory Governance. https://www.oecd.org/en/publications/ai-in-strategic-foresight_aa573076-en.html

Picavet, E. et al. (2025). “Human–Machine Collaboration for Strategy Foresight: The Case of Generative AI.” Public Administration Review. Queensland University of Technology. https://eprints.qut.edu.au/261336/

Soeiro de Carvalho, P. (2024). “How GenAI Will Transform Strategic Foresight.” IF Insight & Foresight / Hong Kong Institute of Futurologists & Foresight Analysts. https://hkifoa.com/wp-content/uploads/2024/12/how-genai-transform-strategic-foresight.pdf

THANK you for this thought provoking essay.

Some highlights for me:

The focus on human judgment: "This only works if the practitioner has enough independent expertise to distinguish a genuinely interesting provocation from confident nonsense." Pretty sure we need to prepare for an absolute deluge of confident futures nonsense.

The role of human facilitation and collective sensemaking...in human time. Points to one of my favorite topics - the "new physics of collective sensemaking." (https://journals.sagepub.com/doi/10.1177/26339137251328909 and https://journals.sagepub.com/doi/10.1177/26339137251367733#core-bibr1-26339137251367733-1 and https://papers.ssrn.com/sol3/papers.cfm?abstract_id=4751774).

The potential for democratization of futures work and the arrival of the kinds of simulation capabilities that we have been talking about for years. Now is the time to not just be a dramaturg but perhaps also a set designer, building the props for people to stage their own plays.

The call to action: "Invest in the parts of your practice that live at the ends: reading rooms, framing questions, designing emotional arcs, shepherding action through institutional resistance." This makes me want to get a lot better at the parts of facilitation that feel most challenging for me.

The proof will lie in the pudding, as they say, and I look forward to exploring more AI-generated outputs. Since the practice is so subjective, my guess is that each of us will have different places where we feel the outputs fall flat, sensationalize, or turn into mushy slop.

Thank you! This is the best analysis I have read of what AI may or may not do and the impact on us humans. I wish more people would read it instead of focusing on fears and threats.